Waypoint-1.5 Brings Real-Time AI Worlds to Everyday GPUs

Higher-fidelity generative worlds now run on consumer hardware, through both streaming and local execution.

Raising A Biome: The Trials and Tribulations of Waypoint At Home

At Overworld, we want you to play our open-weight world models on your very own computer, and Biome is our solution: a cross-platform, fully-integrated, open-source client. But how'd we build it? And what challenges did we run into?

Engineering Safety for Interactive World Models

Safety at Overworld starts well before a prompt reaches the model. From dataset filtering to runtime safeguards and open releases, we’ve been building a layered safety system across the entire pipeline. This post is not intended to weigh in on broader moral or philosophical debates surrounding AI.

Transforming Prompts into Worlds

Our prompting pipeline rewrites user input into structured signals the world model actually understands: seed images, synthetic video, and aligned controls. It started as a safety filter. It became the other half of the product.

Diffusion Tokenizers: Diffusion Decoders Upgraded!

GANs are tough. Diffusion is simple. Diffusion VAEs are the next step in controllable HD reconstructions.

The Immersion Gap

In the race to maximize visual fidelity, the fun factor of world models has suffered. We've arrived in an era where a full second of latency is considered playable, 4fps is considered real-time, and a rack of $50,000 GPUs is considered accessible. We want to fix this, and Project Genie reminds us why it matters.

The Path to Real-Time Worlds and Why It Matters

Today we're releasing Waypoint-1, the first real-time diffusion world model optimized for consumer GPUs.

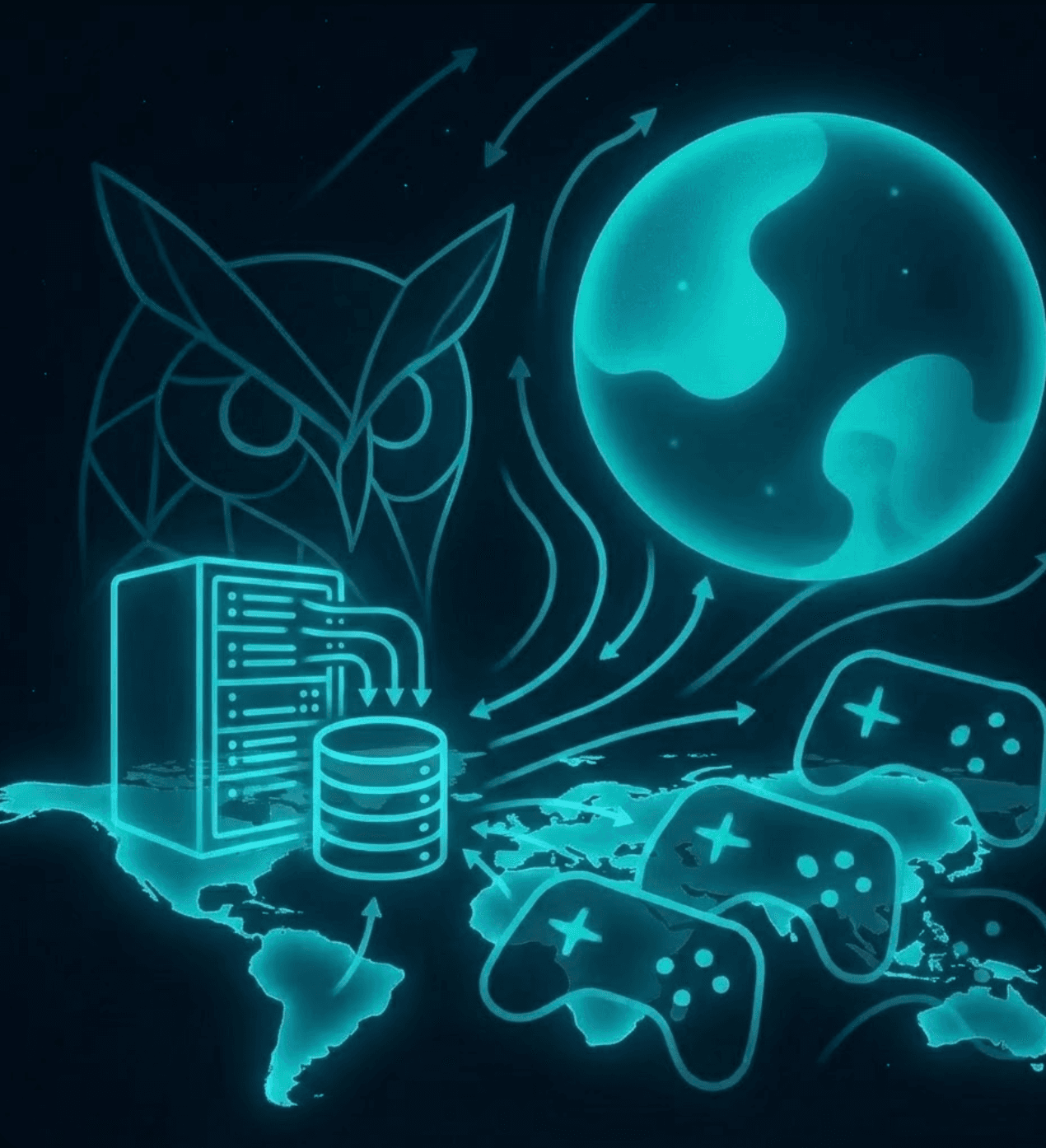

Scaling Up Data Collection: Lessons from OWL Control and OWL Tube

We needed a bunch of annotated game data, we were in a hurry, and we made it happen as fast as we possibly could. It was tricky, we broke a lot of things, and it worked. Here's what we did, what worked and what we broke.

Optimizing World Model Inference Speed with Quantization

This post tackles a critical bottleneck in large transformer-based generative models: the KV cache. During inference, the cache stores the keys and values computed at previous steps so they don't have to be recomputed. However, it grows with the context, which consumes memory, and it hits bandwidth limits because the GPU rereads it at every step. We detail how we address this with quantization and other optimization techniques.
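
The summary above doesn't specify which quantization scheme the post uses. The sketch below assumes symmetric int8 quantization with one float32 scale per head and cached position. This is a common baseline and not necessarily Overworld's method. The names `quantize_int8`, `dequantize_int8`, and `QuantizedKVCache` are hypothetical. Keys and values are stored as int8 and dequantized when read:

```python
import numpy as np

def quantize_int8(x: np.ndarray):
    """Symmetric int8 quantization along the last axis: x is approx q * scale."""
    max_abs = np.max(np.abs(x), axis=-1, keepdims=True)
    # One float32 scale per row; all-zero rows get scale 1.0 to avoid dividing by zero.
    scale = np.where(max_abs == 0, 1.0, max_abs / 127.0).astype(np.float32)
    q = np.clip(np.round(x / scale), -127, 127).astype(np.int8)
    return q, scale

def dequantize_int8(q: np.ndarray, scale: np.ndarray) -> np.ndarray:
    return q.astype(np.float32) * scale

class QuantizedKVCache:
    """Hypothetical append-only KV cache holding int8 payloads plus float32 scales."""

    def __init__(self):
        self.keys, self.values = [], []  # one (q, scale) pair per cached position

    def append(self, k: np.ndarray, v: np.ndarray) -> None:
        # k, v: (num_heads, head_dim) for a single new position; scales are per head.
        self.keys.append(quantize_int8(k))
        self.values.append(quantize_int8(v))

    @staticmethod
    def _dequantize_all(entries) -> np.ndarray:
        # Dequantize on read -> (num_heads, seq_len, head_dim) float32.
        return np.stack([dequantize_int8(q, s) for q, s in entries], axis=1)

    def get(self):
        return self._dequantize_all(self.keys), self._dequantize_all(self.values)

    def nbytes(self) -> int:
        return sum(q.nbytes + s.nbytes for q, s in self.keys + self.values)

# Usage: cache 16 positions of 8 heads with head_dim 64.
rng = np.random.default_rng(0)
steps, heads, head_dim = 16, 8, 64
ks = rng.standard_normal((steps, heads, head_dim)).astype(np.float32)
vs = rng.standard_normal((steps, heads, head_dim)).astype(np.float32)
cache = QuantizedKVCache()
for t in range(steps):
    cache.append(ks[t], vs[t])
K, V = cache.get()
fp16_bytes = 2 * steps * heads * head_dim * 2  # keys + values at 2 bytes each
print(cache.nbytes(), fp16_bytes)  # 17408 32768
```

In this toy configuration, the cache holds 17,408 bytes versus 32,768 for fp16 keys and values. The cost is a rounding error of at most half a scale step per element. Per-head scales keep one outlier head from degrading the precision of the others.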

Why Human Evaluation is the Missing Piece in World Model Development

We're releasing OWL Eval, the first open-source evaluation platform built specifically for studying how humans perceive AI-generated videos. After running studies with hundreds of participants, we've learned that human evaluation reveals critical model failures that automated metrics completely miss. Our platform makes it dead simple to run these studies at scale.

Optimizing Diffusion Decoders with Depth Pruning (Using ODE Regression)

We show how applying ODE regression drastically reduces the depth of our diffusion decoder, yielding a 40x speedup!

Product of Experts for Visual Generation - An Illustrated Example

In this blog post, we illustrate a paper that combines multiple specialist models and incorporates each one's expertise by letting them jointly steer diffusion sampling at inference time. We also provide code examples, visualizations, and intuitions!
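
Here is a toy illustration of the core idea. It assumes each expert exposes a score, meaning the gradient of its log-density. Multiplying densities adds log-densities, so a sampler can follow the sum of the experts' scores. The sketch uses two 1-D Gaussian experts so that the product has a closed form. It also swaps in plain Langevin dynamics for a full diffusion sampler. The helper names are hypothetical, and this is not the illustrated paper's exact method:

```python
import numpy as np

def gaussian_score(mu: float, sigma: float):
    """Score (gradient of the log-density) of a 1-D Gaussian expert N(mu, sigma^2)."""
    return lambda x: -(x - mu) / sigma**2

def product_score(experts, weights=None):
    """Product of experts: log-densities add, so scores add (optionally weighted)."""
    weights = weights if weights is not None else [1.0] * len(experts)
    return lambda x: sum(w * score(x) for w, score in zip(weights, experts))

def langevin_sample(score, n=20_000, steps=500, eps=1e-2, seed=0):
    """Unadjusted Langevin dynamics, standing in here for a diffusion sampler."""
    rng = np.random.default_rng(seed)
    x = rng.standard_normal(n)
    for _ in range(steps):
        x = x + eps * score(x) + np.sqrt(2 * eps) * rng.standard_normal(n)
    return x

# Two specialists: one prefers x near -1 (broad), one prefers x near 2 (sharp).
experts = [gaussian_score(-1.0, 1.0), gaussian_score(2.0, 0.5)]
samples = langevin_sample(product_score(experts))

# Closed form for a product of Gaussians: precisions add, means are precision-weighted.
precision = 1 / 1.0**2 + 1 / 0.5**2                   # 5.0
mu_star = (-1.0 / 1.0**2 + 2.0 / 0.5**2) / precision  # 1.4
print(f"sampled mean {samples.mean():.3f}, var {samples.var():.3f}")
print(f"closed form mean {mu_star:.3f}, var {1 / precision:.3f}")
```

The sampled mean and variance should land near the closed-form values of 1.4 and 0.2. The small remaining gap in variance comes from Langevin's finite step size. Note that the sharper expert pulls the result toward its own preference.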

Fast Audio Video World Models: Part 2

We trained an autoencoder with depth maps in the latent, which produced far better depth consistency in downstream generations. Next, we're adding optical flow to training and solving the KV cache problem.

Generation vs. Reconstruction: Striking A Balance

The generation vs reconstruction trade-off gets weird when you push compression. Learn more about how we're managing it in this blog post!

Fast Audio Video World Models: Attempt 1

Autoencoders for Diffusion: A Deep Dive

Join us as we try to figure out how to make a good custom autoencoder for our World Model.

Towards an Even Larger Video Game Dataset: Inverse Dynamics Models For Bootstrapping Unlabelled Data

This week we set our sights on taming unlabeled internet data for World Model training.

Towards a Large Open Video Game Dataset

Today we are marking the start of our journey towards a general purpose open source video game world model.